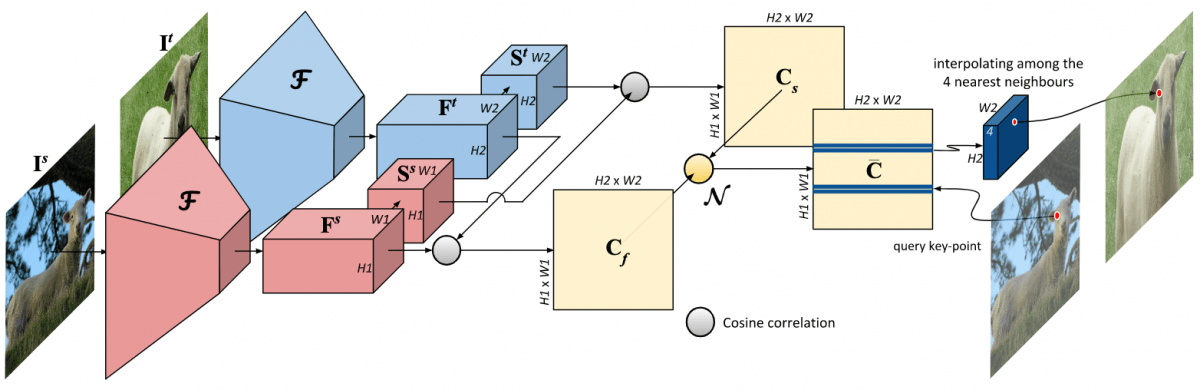

In this project, we tackle the task of establishing dense visual correspondences between images containing objects of the same category. This is challenging because of large intra-class variations and the lack of dense pixel-level annotations. To handle this challenge, we propose a convolutional neural network architecture, called the adaptive neighbourhood consensus network (ANC-Net), that can be trained end-to-end with sparse keypoint annotations. At the core of ANC-Net is our proposed non-isotropic 4D convolution kernel, which forms the building block of the adaptive neighbourhood consensus module for robust matching. We also introduce a simple and efficient multi-scale self-similarity module in ANC-Net to make the learned features robust to intra-class variations. Furthermore, we propose a novel orthogonal loss that enforces the one-to-one matching constraint. We thoroughly evaluate our method on various benchmarks, where it substantially outperforms state-of-the-art methods.
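To make the one-to-one matching constraint concrete, the following is a minimal illustrative sketch and not the ANC-Net implementation. It assumes that the 4D correlation tensor between two feature maps has been flattened into an N x M matrix of cosine similarities, and it uses one generic orthogonality penalty, the squared Frobenius norm of `M @ M.T - I`, which may differ from the exact loss used in the paper. The function names `correlation_matrix` and `orthogonality_penalty` are hypothetical.

```python
import math


def l2_normalise(vec):
    """Scale a descriptor to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def correlation_matrix(feats_a, feats_b):
    """Cosine similarity between every descriptor in A and every one in B.

    feats_a holds N descriptors and feats_b holds M descriptors, one per
    spatial location. The N x M result is the 4D correlation tensor
    (h_a, w_a, h_b, w_b) with each image's spatial axes flattened.
    """
    a = [l2_normalise(f) for f in feats_a]
    b = [l2_normalise(f) for f in feats_b]
    return [[sum(x * y for x, y in zip(fa, fb)) for fb in b] for fa in a]


def orthogonality_penalty(match):
    """Squared Frobenius norm of (match @ match.T - I).

    Assumption: this is a generic penalty chosen for illustration. It is
    zero when the rows are orthonormal, as in a permutation matrix, and
    grows when two source locations claim the same target location.
    """
    n = len(match)
    total = 0.0
    for i in range(n):
        for j in range(n):
            dot = sum(p * q for p, q in zip(match[i], match[j]))
            target = 1.0 if i == j else 0.0
            total += (dot - target) ** 2
    return total


# Toy descriptors: the two locations in A match the two in B in swapped order.
corr = correlation_matrix([[1.0, 0.0], [0.0, 1.0]], [[0.0, 2.0], [3.0, 0.0]])
one_to_one = orthogonality_penalty(corr)
many_to_one = orthogonality_penalty([[1.0, 0.0], [1.0, 0.0]])
```

In this toy case the one-to-one assignment incurs no penalty, while the assignment in which both source locations pick the same target is penalised.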

Publications

[1] Kai Han, Rafael S. Rezende, Bumsub Ham, Kwan-Yee K. Wong, Minsu Cho, Cordelia Schmid, Jean Ponce

SCNet: Learning Semantic Correspondence

International Conference on Computer Vision (ICCV), 2017. [project page] [code]

[2] Shuda Li*, Kai Han*, Theo W. Costain, Henry Howard-Jenkins, Victor Prisacariu

Correspondence Networks with Adaptive Neighbourhood Consensus

IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020. (* indicates equal contribution.) [project page] [code]

[3] Xinghui Li, Kai Han, Shuda Li, Victor Prisacariu

Dual-Resolution Correspondence Networks

Conference on Neural Information Processing Systems (NeurIPS), 2020. [project page] [code]